Why digital identity suddenly matters a lot more

Gen AI has made faking almost anything—a person’s voice, a government ID, a corporate email, even a long chat history—cheap and convincing.

For technology leaders, that changes the shape of almost every digital interaction:

- Who is on the other side of this request?

- Did this data really come from the system or person it claims to?

- Can this AI agent safely act on behalf of my customer or my organization?

Passwords, SMS OTPs, and ad‑hoc KYC flows were already creaking. In a world of hyper‑realistic deepfakes and autonomous agents, they are nowhere near enough.

What we need is a stronger trust fabric built on cryptographically anchored identity: credentials, wallets, and institutions working together.

From “login” to verifiable identity

Most organizations still treat identity as “whatever shows up in my IdP plus some KYC files in a back office.” That model breaks down when:

- Attackers can create hundreds of synthetic personas in minutes.

- Employees, customers, and partners are using AI agents to act on their behalf.

- Regulatory expectations around fraud, explainability, and data lineage are rising.

A more resilient approach centers on three building blocks:

- Credentials: Portable, cryptographically signed attestations about someone or something.

- Wallets: Secure containers where people, organizations, and agents store and present those credentials.

- Institutions: Trusted issuers and verifiers that anchor those credentials in law, regulation, and liability.

Together, these shift the trust question from “Do I trust this session?” to “Do I trust the verified attributes and the issuer standing behind them?”

Credentials: the new trust primitives

Think of digital credentials as machine‑checkable claims. They might represent:

- Person attributes: legal name, age, residency, employer, role.

- Organizational attributes: regulated entity status, licenses, risk rating.

- Device and agent attributes: hardware attestation, model version, allowed actions, approval limits.

In the Gen AI era, the most important properties are:

-

Cryptographic binding

Each credential is signed by an issuer key and bound to a subject identifier (person, organization, device, or agent). Verifiers can mathematically check integrity and provenance. -

Selective disclosure

To minimize data leakage, holders should be able to reveal only what’s needed (e.g., “over 18” instead of full DOB). This is crucial when AI agents are routinely passing credentials around. -

Revocation and expiry

When people leave a company, devices are compromised, or policies change, you must be able to revoke or short‑lived credentials quickly and have that reflected in verification decisions.

Strategic implication for Leaders: design your systems so that “What do I need to trust here?” is answered with a small, explicit set of credentials and issuers—not with a tangle of static roles and brittle KYC scripts.

Wallets: where identity lives (for humans and agents)

If credentials are the new trust primitives, wallets are how they are used in practice.

We should expect three classes of wallets to coexist:

- User wallets

Apps or system components where humans hold government IDs, bank attestations, employment credentials, and more. These may be:- Custodial (managed by an institution) or non‑custodial (user controls keys).

- Integrated into mobile OS, browser, or super‑apps.

- Enterprise wallets

Where organizations hold:- Certificates asserting regulatory status and licenses.

- Delegation credentials (“this business unit can sign contracts up to $X”).

- Keys and policies for their AI agents.

- Agent wallets

Where AI systems hold:- Proof they are authorized to act for a person or organization.

- Scoped credentials defining their allowed operations and limits.

- Audit‑friendly traces of what they presented and when.

Key design requirements:

- Strong key management: Hardware‑backed storage where possible, plus recovery flows that don’t undermine security (e.g., social recovery or institutional guardians rather than simple email resets).

- Policy‑aware presentation: Wallets should automatically enforce organizational and regulatory policy about which credentials can be presented to which verifiers in which jurisdictions.

- Auditability: Especially for enterprise and agent wallets, you need a tamper‑evident log of credential usage to satisfy regulators and internal audit.

Strategic implication: Plan for wallets not as a niche feature, but as a core security and UX primitive—similar in importance to SSO today.

Institutions: who gets to say what’s true

Cryptography can tell you that a credential is valid and unmodified. It cannot tell you whether the claim is meaningful or legally recognized. That’s where institutions come in.

In practice, you’ll rely on three overlapping layers:

-

Regulated authorities

Governments, regulators, banks, insurers, and professional bodies issuing credentials that have legal standing. - Ecosystem trust frameworks

Sector or region‑specific schemes that define:- Which issuers are recognized.

- What schemas and assurance levels mean.

- How liability is shared when something goes wrong.

- Enterprise trust policies

Your own interpretation of risk:- Which issuers you accept for which use cases.

- How to combine multiple credentials into a decision.

- How to factor in behavior, device posture, and anomaly signals.

In the Gen AI era, institutional trust becomes programmable:

- You encode your trust policy in rules engines and access control systems.

- You can simulate impact when a new issuer is added or an ecosystem rule changes.

- You can enforce differentiated trust for humans, devices, and AI agents.

Strategic implication: treat “trust policy” as a first‑class asset—versioned, tested, and governed—rather than as scattered config files and ad‑hoc business rules.

Where Gen AI breaks traditional trust models

Gen AI doesn’t just make phishing emails better. It erodes three pillars many systems quietly depend on:

-

Biometrics as a silver bullet

Voice, face, and even behavioral biometrics can now be synthesized or replayed. Alone, they are not enough to anchor high‑value actions. -

Document authenticity checks

AI can generate realistic IDs, bank statements, and utility bills. Identity proofing must move from “looks plausible” toward “cryptographically verifiable and issuer‑backed.” -

Human‑in‑the‑loop as the last line of defense

Humans are now assisted (and sometimes overwhelmed) by AI. The person “reviewing and approving” might be skimming summaries created by an agent that an attacker partially controls.

To respond, you need to:

- Push trust deeper into cryptographic proofs and issuer accountability.

- Design flows where no single human or AI agent can subvert critical controls.

- Build continuous verification: identity and trust aren’t one‑time events at onboarding; they’re ongoing signals.

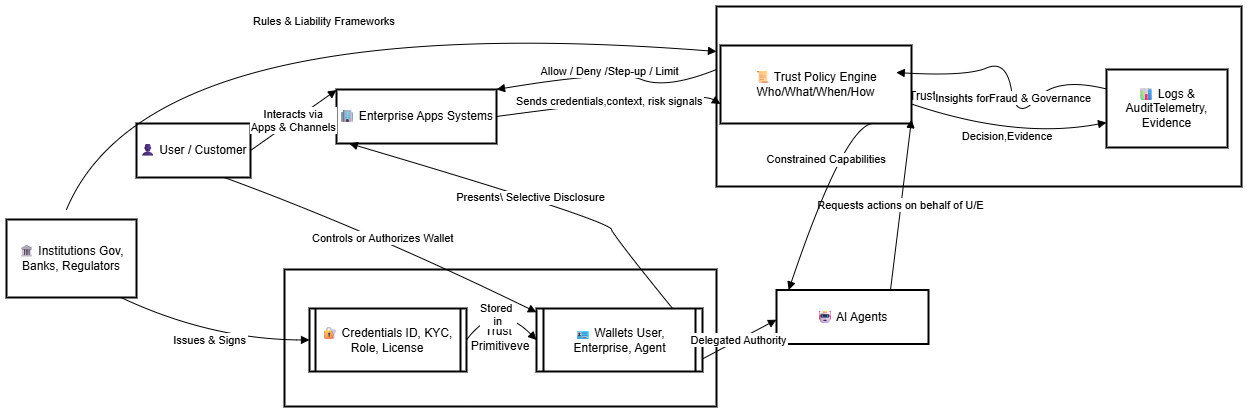

A reference pattern: credential‑centric decisioning

A practical architecture many organizations are converging on looks like this:

- 1. Identify what must be trusted

For each critical journey (e.g., “open account,” “approve payment,” “publish code,” “run high‑risk AI action”), document:- The subject (person, device, or agent).

- The key attributes you need to trust.

- The minimum assurance level and regulatory requirements.

- 2. Map to credentials and issuers

Decide:- Which credentials express those attributes.

- Which institutions are acceptable issuers.

- How long those credentials remain valid.

- 3. Implement wallets and integration points

- Integrate user and enterprise wallets into your apps (mobile, web, internal tools).

- Expose verification APIs that accept credentials and return normalized trust signals to downstream systems.

- 4. Encode trust policy

- Use a policy engine to combine credentials, device posture, behavioral analytics, and context into decisions:

- Allow, deny, step‑up verification, route for review, or limit AI agent capabilities.

- Version and test these policies like code.

- Use a policy engine to combine credentials, device posture, behavioral analytics, and context into decisions:

- 5. Instrument and audit

- Log which credentials and issuers were used, what policies were applied, and why a decision was taken.

- Use this both for compliance and for improving fraud models over time.

This pattern shifts your systems from identity as a login event to trust as a continuous, data‑driven process.

Trust boundaries for AI agents

As organizations deploy AI copilots and autonomous agents into production, the central question becomes:

What is this agent actually allowed to do, for whom, and under what conditions?

A robust approach draws clear trust boundaries:

-

Agent identity

Each agent instance has its own identifier and credential set (e.g., “FinancePayablesAgent v2.3 for Org X”). - Delegated authority

The human or enterprise that owns the agent issues credentials defining:- Whose data it may access.

- Which systems it may call.

- Transaction limits and approval thresholds.

- Context‑aware constraints

Policy engines evaluate:- The agent’s credentials and model version.

- The risk of the requested action (e.g., “change beneficiary vs. run report”).

- Environmental context (location, device, time, anomaly scores).

High‑risk actions might require:

- Additional human approvals with independent verification.

- Stronger credentials (e.g., from a compliance officer or system owner).

- Execution within constrained sandboxes with pre‑approved prompts and data scopes.

Strategic implication: treat AI agents as first‑class principals in your identity and access management stack—not as anonymous API clients hidden behind a generic service account.

Governance and operating model: beyond technology

Getting the technology right is necessary but not sufficient. To make this work at scale, you need:

- Clear ownership

- Who owns digital identity architecture?

- Who owns trust policy across lines of business?

- How are conflicts between security, product, and compliance resolved?

- Cross‑functional governance

Establish a Digital Trust Council (or similar) that includes:- Security, risk, and compliance leaders.

- Data and AI leadership.

- Product and customer experience owners.

This group defines:

- Trust principles (e.g., “cryptographic verifiability by default,” “minimum disclosure,” “explainable decisions”).

- Standards for credentials, wallets, and agent controls.

- Approval processes for new issuers and trust frameworks.

- Education and culture

Help teams shift mental models:- From “passwords + OTPs are good enough” to “credentials and issuers define trust.”

- From “we know our users” to “we trust what we can verify and explain.”

- From “AI is a black‑box assistant” to “AI is a constrained, credentialed principal.”

A pragmatic roadmap

For most CTOs and technology leaders, the question is not whether to modernize digital identity, but how to sequence it.

A practical roadmap:

- 1: Foundations

- Inventory your highest‑risk user journeys and AI use cases.

- Identify where today’s controls depend on weak signals (documents, static credentials, opaque manual reviews).

- Stand up a cross‑functional Digital Trust Council and define initial principles.

- Pilot verifiable credential and wallet capabilities in a contained, high‑value journey (e.g., high‑value payments, privileged access, or AI agent approvals).

- 2: Scale patterns

- Standardize schemas and issuers for your main identity attributes.

- Integrate credential verification into your IAM and policy engines.

- Introduce agent‑specific identities and constrained authority for key AI workloads.

- Begin capturing structured trust telemetry across systems.

- 3: Ecosystem and automation

- Join or help shape sector‑specific trust frameworks.

- Automate more of your trust policy lifecycle: simulation, testing, and rollout pipelines.

- Extend wallets and credential usage beyond customers to partners, suppliers, and devices.

- Use your trust telemetry to improve fraud models, customer journeys, and AI governance.

In the Gen AI era, trust becomes both more fragile and more valuable. Organizations that treat digital identity as a strategic capability—rooted in credentials, wallets, and institutions—will be able to:

- Move faster with AI while staying within regulatory and ethical guardrails.

- Offer smoother, safer experiences to customers and partners.

- Compete not just on features, but on verifiable trust.

The opportunity now is to architect that trust fabric deliberately—before it is imposed on you by regulators, fraud losses, or the next major breach.